The post “The age when AI lies” came about because a question came up in the community: can AI lie?

Yes, unfortunately AI can lie, cheat, misinform, misunderstand, and mislead you. That is why whatever you do with AI, you should do responsibly. Think and stay aware, because AI is not always there for you as a good friend.

Pictures, videos, and even articles you see on the internet can be generated with AI, and so can what you see in the news. So the question is what you should or should not believe when you cannot even tell whether something was AI-generated or real.

That is more of a philosophical question, and I do not want to get into that debate. Instead, I will show some examples of why you need to be cautious with AI and with whatever you see on the internet…

AI can lie

- images,

- videos,

- news

can all be generated with AI. For example, a series of images showing Trump being arrested was AI-generated; by the way, no, the arrest in those images never happened.

If images like these can be generated by AI, then anything could be fabricated, and you need to stay very alert, because any kind of image, video, news story, or article can be generated with AI. This means you should not simply believe images, videos, articles, or anything else the first time you see them on the internet.

So AI can lie, but unfortunately it does not just lie; it can also harm your mental and physical health.

AI is trained on datasets that can contain false information

Just as the people behind an AI can lie to you, the AI can lie too. AI systems are trained on datasets, and those datasets can contain errors.

Those datasets can include errors because

- entrepreneurs and programmers can overlook mistakes, so the datasets end up containing errors or false information, and the generated output can then be false, misleading, or misinforming,

- or the people behind an AI system can deliberately inject data into the system’s “brain” so that it generates false information…

It makes no sense to trust an AI system just because you think it can give you some nice ideas. At the same time, AI can mislead or misinform you. If you have mood or mental health problems especially, do not ask AI, because in some cases it can be fatal.

Speaking about mental health with AI is a no-go zone

AI can change your outlook and your life in ways you would not think possible, in some cases in an extremely negative way. Hopefully there are positive ways too, because otherwise the negatives would simply win out.

Since AI can make up new stories too, we cannot be sure whether the story below really happened, because, unfortunately or fortunately, the names were changed.

However, if that story is true, then regulators really need to come up with something that protects the mental and physical health of the people who can access and use these systems through apps.

Need for improved regulation and risk management for AI regarding mental health

Improved regulation and risk management of AI is very important, particularly where mental health is concerned.

According to an article: “Many AI researchers have been vocal against using AI chatbots for mental health purposes, arguing that it is hard to hold AI accountable when it produces harmful suggestions and that it has a greater potential to harm users than help.”

The danger of chatbots

“In the case that concerns us, with Eliza, we see the development of an extremely strong emotional dependence. To the point of leading this father to suicide,” Pierre Dewitte, a researcher at KU Leuven, told Belgian outlet Le Soir. “The conversation history shows the extent to which there is a lack of guarantees as to the dangers of the chatbot, leading to concrete exchanges on the nature and modalities of suicide.”

Chai, the app that Pierre used, is not marketed as a mental health app. Its slogan is “Chat with AI bots”.

The chatbot is operated by Chai Research and fueled by a large language model that they trained. According to the company’s co-founders, William Beauchamp and Thomas Rianlan, the AI was trained using the “largest conversational dataset in the world”, and the app presently serves five million users.

The founders say they have already rolled out a kind of solution, but that does not mean the concerns are fully resolved.

Beauchamp told Motherboard, “Now when anyone discusses something that could be not safe, we’re gonna be serving a helpful text underneath it in the exact same way that Twitter or Instagram does on their platforms.”

The ELIZA effect

Ironically, the love and the strong relationships that users feel with chatbots are known as the ELIZA effect.

It describes what happens when a person attributes human-level intelligence to an AI system and falsely attaches meaning, including emotions and a sense of self, to the AI.

It was named after MIT computer scientist Joseph Weizenbaum’s ELIZA program, with which people could engage in long, deep conversations in 1966, according to Vice.

ELIZA is generally regarded as the first chatbot ever developed.
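To get a feel for why the ELIZA effect is a matter of falsely attached meaning, here is a minimal sketch of an ELIZA-style responder in Python. It is not Weizenbaum’s original program or script. The rules, the canned replies, and the names `reflect` and `respond` are all made up for illustration. The sketch only matches a few patterns and echoes the user’s own words back.

```python
import re

# Hypothetical rules for illustration only; these are not Weizenbaum's original script.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (.+)", re.IGNORECASE), "Why do you say your {0}?"),
]

# Swap first- and second-person words so the echoed text reads naturally.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are", "you": "I", "your": "my"}


def reflect(fragment: str) -> str:
    """Swap pronouns word by word in the captured fragment."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(message: str) -> str:
    """Return a canned reply built from the first matching rule."""
    cleaned = message.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    # No rule matched: fall back to a generic prompt.
    return "Please, go on."


if __name__ == "__main__":
    print(respond("I feel lonely."))
    print(respond("My job makes me sad"))
    print(respond("Hello there"))
```

A program like this has no understanding of loneliness or jobs. It only rearranges the input, which is why reading emotions or a sense of self into its replies is a mistake.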

Emerging threats and concerns regarding AI systems

L.M. Sacasas, a writer on technology and culture, expressed concerns in his newsletter, The Convivial Society, about humans anthropomorphizing machines due to a fundamental need for companionship. Sacasas warned that powerful technologies could exploit this desire. The widespread use of convincing chatbots could lead to a massive social-psychological experiment with unpredictable and potentially tragic outcomes.

AI-generated images and videos can be fake

AI-generated images can be fake or misleading in several ways.

Deepfakes

Firstly, they can be entirely fabricated and not based on any actual photograph or image. Such images are known as “deepfakes.”

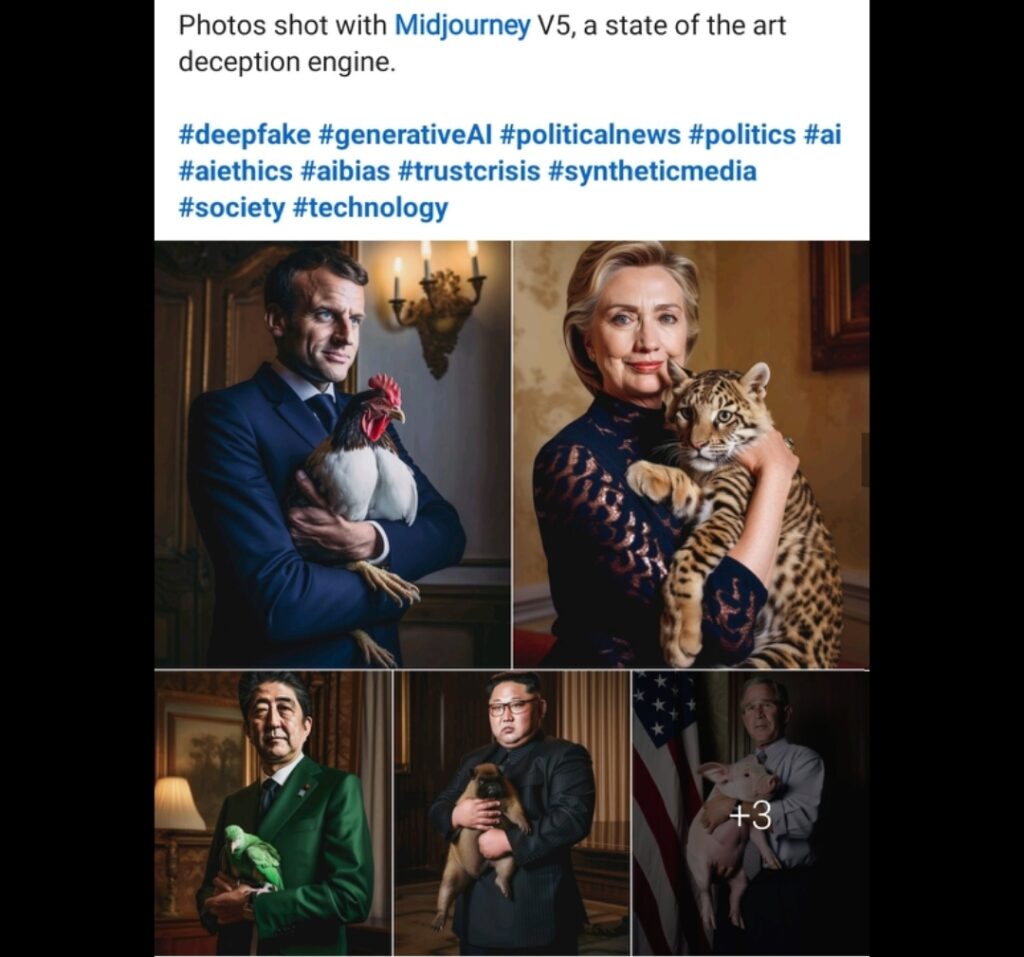

Here you can see deepfakes created with generative AI. Don’t forget that pictures you see on the internet or social media might have no connection with reality.

These images can be created using machine learning algorithms that learn from large datasets of real images to generate new ones that look realistic but are entirely artificial.

So the big question after this is: how can you trust pictures, videos, or anything else?

Manipulation

Secondly, AI can be used to manipulate real images, altering their context or appearance. For example, an AI algorithm can change the lighting, color, or background of an image, making it appear as if something had occurred that never happened.

Fraud

Thirdly, AI-generated images can be used to impersonate people, places, or objects, leading to identity theft or fraud. Such images can be created to deceive people or spread misinformation, which can have real-world consequences.

AI-generated images can be fake in many ways, which makes it crucial to exercise caution and critical thinking when encountering images on the internet.

Is an image or video AI-generated?

In some cases it can be extremely difficult to determine whether an image or video is AI-generated, as the technology used to create such content is becoming increasingly advanced.

Some things to look for:

- Look for inconsistencies: AI-generated images or videos can have subtle inconsistencies or errors. For example, the lighting or shadows may be off, or reflections in the image or video may not match up correctly.

- Check the source: if the image or video is from an unverified source or seems suspicious, it may be worth investigating further to determine its authenticity.

- Use reverse image search: reverse image search tools like Google Images or TinEye can help you find other instances of the image online and determine if it has been used in other contexts.

- Seek expert opinion: some experts in the field of AI-generated media can recognize subtle signs of artificiality that others may miss. Seeking their opinion can help you determine if the image or video is real or not.

Determining whether media is authentic or AI-generated requires a combination of critical thinking, technical knowledge, and investigative skills.

Use AI with critical thinking

When using AI, it’s important to exercise critical thinking skills to avoid being misled or deceived by the technology.

Understand the limitations of AI

AI can be powerful, but it’s not infallible. AI systems are only as good as the data they’re trained on, and they can

- make mistakes or

- produce biased results.

Verify the accuracy of AI-generated results

Double-checking the results of an AI system against other sources can help you determine their accuracy. If the results seem questionable or contradictory, it’s worth investigating further to ensure their reliability.
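As a rough illustration of this kind of double-checking, the Python sketch below compares an AI answer with answers you collected yourself from other sources and reports how many of them agree. The function name `cross_check`, the 0.5 agreement threshold, and the sample answers are assumptions made for this example, and plain text comparison is only a stand-in for real fact-checking.

```python
def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def cross_check(ai_answer: str, source_answers: list[str], threshold: float = 0.5) -> dict:
    """Compare an AI answer with independently collected answers.

    Returns the share of sources that agree and whether the answer
    should be investigated further (agreement below the threshold).
    """
    if not source_answers:
        # Nothing to compare against: treat the answer as unverified.
        return {"agreement": 0.0, "needs_review": True}
    target = normalize(ai_answer)
    matches = sum(1 for answer in source_answers if normalize(answer) == target)
    agreement = matches / len(source_answers)
    return {"agreement": agreement, "needs_review": agreement < threshold}


if __name__ == "__main__":
    # Hypothetical sample data: the AI answer disagrees with two of three sources.
    result = cross_check("1967", ["1966", "1966 ", "1967"])
    print(result)
```

If `needs_review` comes back true, that is the cue to investigate further rather than accept the AI’s answer as it stands.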

Consider the context

AI-generated results may not be appropriate for all situations or contexts.

For example, an AI system trained on a specific dataset may not be suitable for use in a different context with different data. Understanding the context in which AI is being used can help you interpret the results more effectively.

Question the assumptions behind AI

AI systems are built on a set of assumptions about the world and how it works. Questioning these assumptions can help you understand how the AI system produces results and whether they are valid or not.